Problem Capturing right Host Primary Disk with INTEL VROC RAID1

-

@george1421 Let me know how that goes. I remember that I had to patch something on buildroot for partclone and ncurses. A new patch may be needed if they made changes for 2024.05.1.

I can give it a try as well. Are you trying to build the newest version of partclone?

-

@rodluz I got everything to compile, but it was a pita.

I compiled partclone 0.3.32 and mdadm 4.3 on buildroot 2024.05.1. The new compiler complains when a package developer references files outside of the buildroot tree. Partclone referenced /lib/include/ncursesw (the multibyte version of ncurses), and buildroot did not build the needed files in the target directory. To keep compiling, I copied the files it was looking for from my Linux Mint host system into the output target file path, then manually updated the references in the partclone package to point to the output target. Not a solution at all, but it got past the error.

Partimage did the same thing, but it references an include slang directory. That directory did exist in the output target directory, so I just updated the package references to that location and it compiled.

In the end, the updated mdadm file did not solve the VROC issue. Next I’m going to boot a Linux live distro and see if it can see the VROC drive. If yes, then I want to see what kernel drivers are there vs. FOS.
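For the driver comparison, a minimal sketch in Python, assuming the output of `lsmod` (or the contents of `/proc/modules`) has been saved from both the live distro and FOS. The function names and the sample module lists are made up for illustration; they are not real output from either system. Drivers that are built into the kernel rather than loaded as modules will not show up in either listing, so this only covers loadable modules.

```python
def parse_module_names(text):
    """Return the set of module names found in lsmod or /proc/modules text."""
    names = set()
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        # lsmod prints a header row starting with "Module"; /proc/modules has none.
        if fields[0] == "Module":
            continue
        names.add(fields[0])
    return names


def compare_modules(live_text, fos_text):
    """Return (modules only on the live system, modules only on FOS), both sorted."""
    live = parse_module_names(live_text)
    fos = parse_module_names(fos_text)
    return sorted(live - fos), sorted(fos - live)


if __name__ == "__main__":
    # Invented sample data, only to show the expected input shape.
    live_sample = "Module Size Used by\nraid1 1 0\nmd_mod 2 1 raid1\nnvme 3 0\n"
    fos_sample = "Module Size Used by\nnvme 3 0\n"
    only_live, only_fos = compare_modules(live_sample, fos_sample)
    print("Only on live distro:", only_live)
    print("Only on FOS:", only_fos)
```

In practice the two sample strings would be replaced by the saved text from each system. Anything listed as "only on live distro" is a candidate for a driver that FOS is missing or has built in.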

-

@george1421

In addition to this topic

I have it working with FOG version 1.5.10.41 (dev version)

Kernel 6.6.34

Init 2024.02.3stable (also with latest kernel/init) is not working.

-


@george1421

I want to add something to this topic, since our system broke again with the same problems. I recently updated to a newer FOG version (1.5.10.1751, and today to 1.5.10.1754), and that seems to have broken it again.

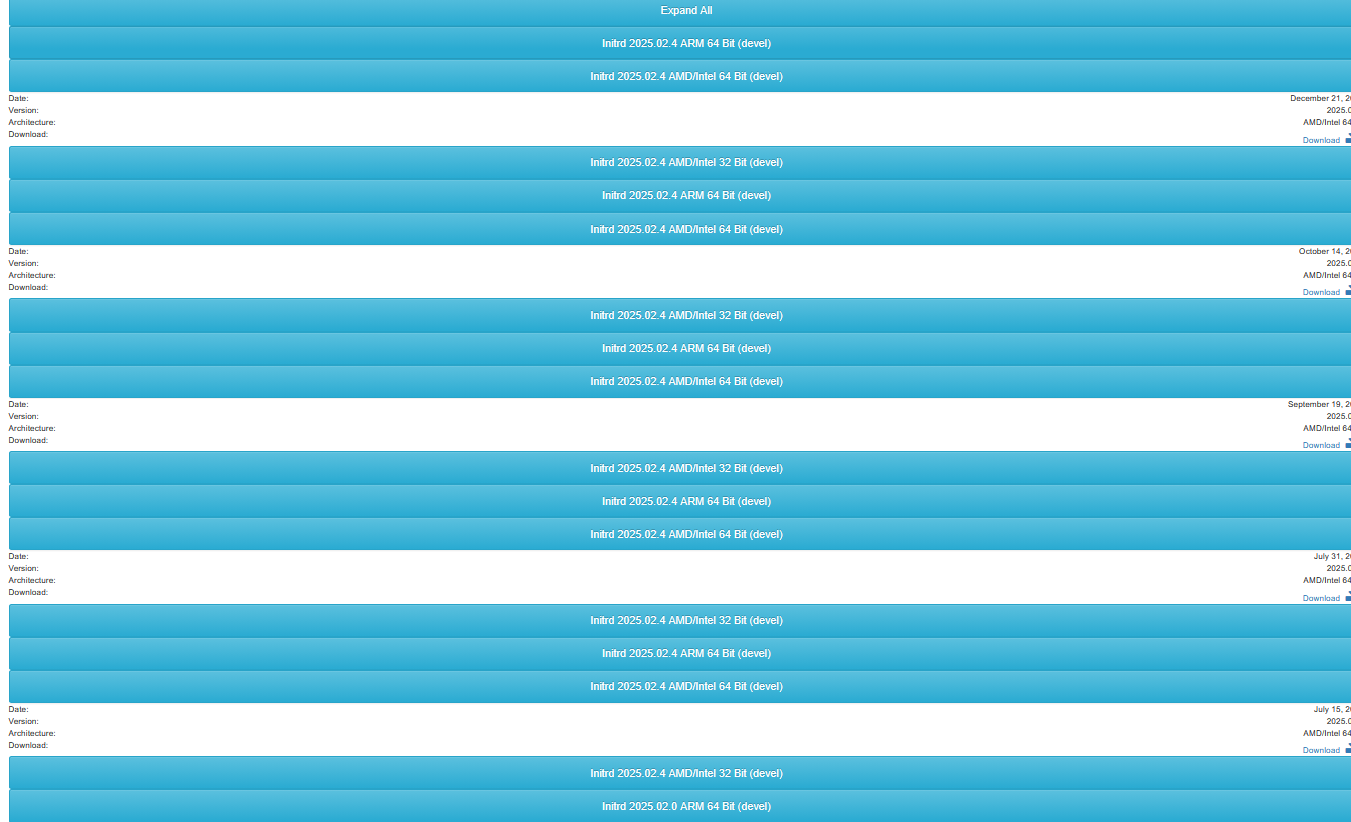

I noticed that updating also switches to the latest Initrd (2025.02.4). Things are broken with the latest Initrd. I set it back to 2024.02.9 AMD/Intel 64 bit and it is working again.

Then I updated Initrd to 202502.0 AMD/Intel 64 bit and it is still working. The strange thing is that the web interface shows the latest version multiple times.

When I open and download the upper one, during PXE boot I see version 20251221 loaded. That one is not working. Is there any way to analyse what the differences are?
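One rough starting point for that comparison, as a sketch only: assuming the working and the non-working init images have each already been unpacked or mounted into a directory (how to do that is not covered here), Python's standard `filecmp.dircmp` can list which files were added, removed, or changed. The function name `diff_trees` and the directory names are hypothetical, and the demo builds two tiny throwaway trees instead of real init contents.

```python
import filecmp
import os
import tempfile


def diff_trees(old_root, new_root):
    """Recursively collect relative paths that differ between two directory trees."""
    report = {"only_old": [], "only_new": [], "changed": []}

    def walk(cmp, rel):
        report["only_old"] += [os.path.join(rel, n) for n in cmp.left_only]
        report["only_new"] += [os.path.join(rel, n) for n in cmp.right_only]
        # diff_files uses filecmp's default comparison: files whose type, size
        # and mtime match are treated as equal without reading their contents.
        report["changed"] += [os.path.join(rel, n) for n in cmp.diff_files]
        for name, sub in cmp.subdirs.items():
            walk(sub, os.path.join(rel, name))

    walk(filecmp.dircmp(old_root, new_root), "")
    for key in report:
        report[key].sort()
    return report


if __name__ == "__main__":
    # Throwaway demo trees standing in for two unpacked init images.
    with tempfile.TemporaryDirectory() as tmp:
        old_root = os.path.join(tmp, "init_old")
        new_root = os.path.join(tmp, "init_new")
        os.makedirs(os.path.join(old_root, "sbin"))
        os.makedirs(os.path.join(new_root, "sbin"))
        with open(os.path.join(old_root, "sbin", "tool"), "w") as fh:
            fh.write("old build")
        with open(os.path.join(new_root, "sbin", "tool"), "w") as fh:
            fh.write("newer build with more bytes")
        print(diff_trees(old_root, new_root))
```

This only shows which files differ, not why. It also says nothing about the kernel, which would need to be compared separately.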

I also want to add that we were focussing on NVMe. But VROC doesn’t seem to work with NVMe in RAID.

There are SATA SSD/NVMe in those slots.