Problem Capturing right Host Primary Disk with INTEL VROC RAID1

-

@Ceregon I’ve never messed with cloning a raid array. Anything can be done, but whether or not it’s going to work with built-in stuff is a different question.

I imagine you have vroc/vmd enabled in the bios on the machine where you’re deploying already. I’ve never got to play with Vroc but I’m familiar with it, just wasn’t able to convince management to buy me the stuff to try it a few years back.

My first guess is that /dev/md124 doesn’t exist because the raid volume doesn’t exist yet, but it sounds like you found that in a debug session on a host you’re trying to deploy to. So that’s probably out. But I just wonder if the VROC volume needs to be created beforehand to be deployed to, but I don’t have a full understanding of when that volume is made.

My next guess would be that a RAID array is a multiple disk system, so the image needs to be captured in multiple disk mode.

Are you having different disk sizes for these RAID volumes? Would capturing with multiple disk or dd be an option?

In theory a RAID is a single volume, and you may be able to capture it correctly, and it sounds like you’ve found others in the forum that have done that?

Another possibility is the need for different VROC drivers in the bzImage kernel, but I feel like if that was the case, then you wouldn’t be able to see the disk at all when capturing.

You could also capture in debug mode and mount the windows drive before starting the capture to see if you can read stuff?

This is from part of a postdownload script that will mount the windows disk to the path /ntfs.

. /usr/share/fog/lib/funcs.sh
mkdir -p /ntfs
getHardDisk
getPartitions $hd
for part in $parts; do
    umount /ntfs >/dev/null 2>&1
    fsTypeSetting "$part"
    case $fstype in
        ntfs)
            dots "Testing partition $part"
            ntfs-3g -o force,rw $part /ntfs
            ntfsstatus="$?"
            if [[ ! $ntfsstatus -eq 0 ]]; then
                echo "Skipped"
                continue
            fi
            if [[ ! -d /ntfs/windows && ! -d /ntfs/Windows && ! -d /ntfs/WINDOWS ]]; then
                echo "Not found"
                umount /ntfs >/dev/null 2>&1
                continue
            fi
            echo "Success"
            break
            ;;
        *)
            echo " * Partition $part not NTFS filesystem"
            ;;
    esac
done
if [[ ! $ntfsstatus -eq 0 ]]; then
    echo "Failed"
    debugPause
    handleError "Failed to mount $part ($0)\n Args: $*"
fi
echo "Done"

Also, hot tip, once you’re in debug mode, you can run

passwd

and set a root password for that debug session. Then run ifconfig to get the IP. Then you can ssh into your debug session with ssh root@ip and put in the password you set when prompted. Then you can copy and paste this stuff, and it’s a lot easier to copy the output or take screenshots.

Another possibility could be using pre and post download scripts to fix the raid volume on the Linux side. I found this information https://www.intel.com/content/dam/support/us/en/documents/memory-and-storage/linux-intel-vroc-userguide-333915.pdf but I didn’t dig into that too much.

-

Thanks for your input. I’ll play around a bit.

-

So I think I got a solution.

With “Host Primary Disk = /dev/md124” I could never get Partclone to capture the whole drive of the RAID 1 I needed, because it always jumps to /dev/md0.

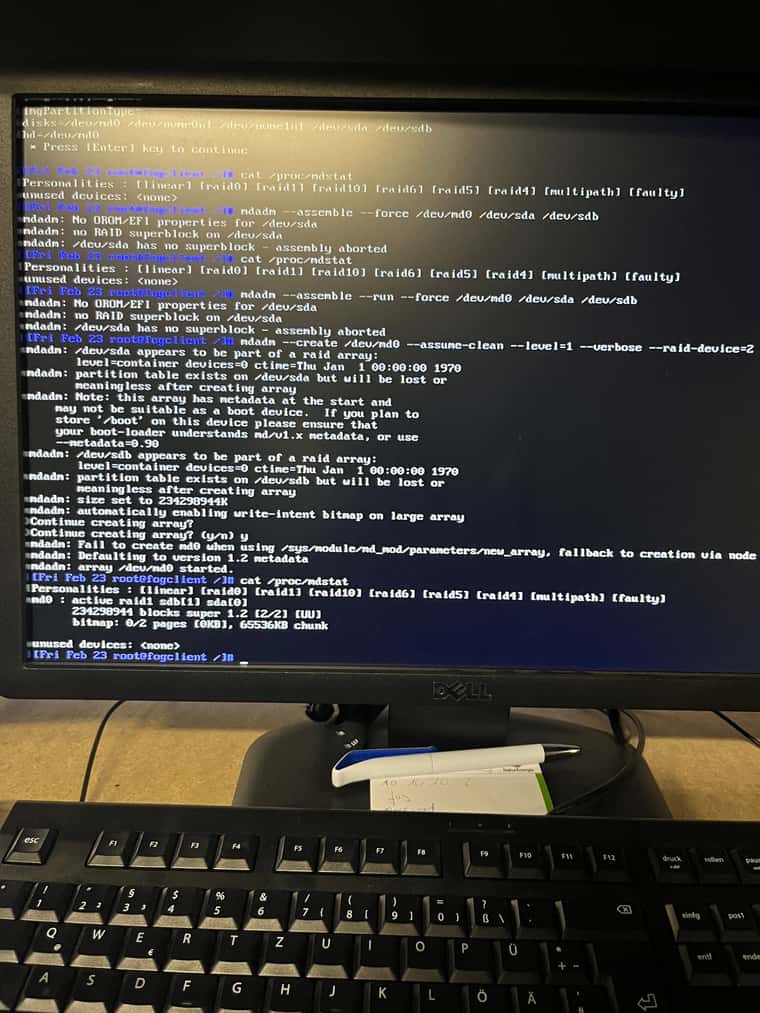

So I tinkered around a bit and first tried to stop the RAID with:

mdadm --stop /dev/md124

After that I made it reassemble at md0 with:

mdadm --assemble --run --force /dev/md0 /dev/sda /dev/sdb

Capturing went fine. But when I tried to deploy to the same machine I got the error “Fsync error: errno 5”.

After some searching around I tried to recreate the array with:

mdadm --create /dev/md0 --assume-clean --level=1 --verbose --raid-devices=2 /dev/sda /dev/sdb

This let me deploy the image without a partclone error. But after all partitions got written I got a mount error. A reboot into Windows got me a bluescreen. So I assumed I had hit a dead end here. It may have something to do with the superblock.

So I took up the idea of mounting the drive, which finally brought me to symlinks.

/dev/md0 gets initialized but is empty. I personally don’t really know why it’s there.

So I just removed it:

rm /dev/md0

And created my symlink:

ln -s /dev/md124 /dev/md0

This way I am able to capture the image with the setting “Multiple Partition Image - Single Disk (Not Resizable)” without an error or a warning.

The deployment went well. The system boots and the RAID 1 is fine. On Monday I will try a “Single Disk Resizable” capture and deployment. After that I will try to implement the commands in a postinit script. Maybe I can work with groups of hosts to decide when this postinit script is used. I haven’t read up on that part of fogproject yet.
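
A rough sketch of how the symlink step might look once it moves into a script. This is untested against FOG itself: the function name alias_md_device is made up for illustration, and /dev/md124 and /dev/md0 are simply the device names from this thread, so other machines may enumerate the array differently.

```shell
#!/bin/bash
# Hypothetical helper: make ALIAS point at the real array device TARGET.
# Mirrors the manual steps above: rm /dev/md0; ln -s /dev/md124 /dev/md0
alias_md_device() {
    local target="$1" alias_path="$2"
    # Do nothing if the real array is missing, so a host without the
    # RAID volume keeps its original device nodes.
    if [ ! -e "$target" ]; then
        echo "Target $target not found, leaving $alias_path untouched" >&2
        return 1
    fi
    rm -f "$alias_path"
    ln -s "$target" "$alias_path"
}

# Example call for the device names used in this thread:
# alias_md_device /dev/md124 /dev/md0
```

The existence check is there so the same script can run on hosts without the array and leave them alone.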

Also, it seemed that one partition of my disk (40 GB / NTFS / 64k cluster size) gets borked at capture. Partclone recognized it as raw. After the deployment I had to format that partition again.

I am not 100% sure. Maybe I got something messed up before capture and the partition was borked from the beginning. I will check on that.

If that’s not the case, I had the idea to run a postdownload script to format that partition and create some folders for me.

Besides a folder structure we prepared for a database server, the partition is an empty placeholder anyway.

-

@Ceregon said in Problem Capturing right Host Primary Disk with INTEL VROC RAID1:

Maybe i can work with groups of hosts to decide when this postinit script is used

It is possible to access FOG system variables in a pre install script (where I would recommend you do any initialization needed for md arrays). So you could use something like FOG user field 1 or 2 (in the host definition) to contain something that indicates you need to init the md array. You might also be able to key it off of the target device name too. I have not looked that deep into it, but it should be possible, at least on the surface.
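
Keying off the target device name could look something like this. It is only a sketch: is_md_device is a hypothetical helper, and primary_disk is a placeholder for whatever variable actually carries the host’s primary disk setting into the script, which I have not verified.

```shell
#!/bin/bash
# Hypothetical gate: succeed only when the given device path is an md device
# such as /dev/md0 or /dev/md124.
is_md_device() {
    case "$1" in
        /dev/md[0-9]*) return 0 ;;
        *)             return 1 ;;
    esac
}

# Example, assuming the host's primary disk setting is available in a
# variable (called primary_disk here purely for illustration):
# if is_md_device "$primary_disk"; then
#     echo "md array host, running RAID preparation"
# fi
```

Hosts with a plain /dev/sda or an empty setting fall through the gate, so the RAID preparation only runs where the host definition points at an md device.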

-

@Ceregon said in Problem Capturing right Host Primary Disk with INTEL VROC RAID1:

Also, it seemed that one partition of my disk (40 GB / NTFS / 64k cluster size) gets borked at capture. Partclone recognized it as raw. After the deployment I had to format that partition again.

So after a few tests I can say that a freshly formatted partition with those parameters is borked on the source host directly after capturing.

The deployed image also has the borked partition. Maybe the cluster size is the problem here? Sadly we need it.

So I guess we will have to manually format the partition and set the correct NTFS rights.

-

I am trying the same thing.

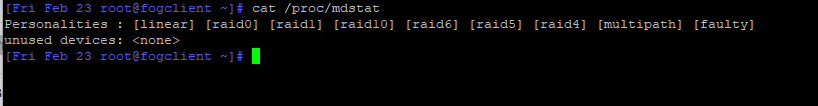

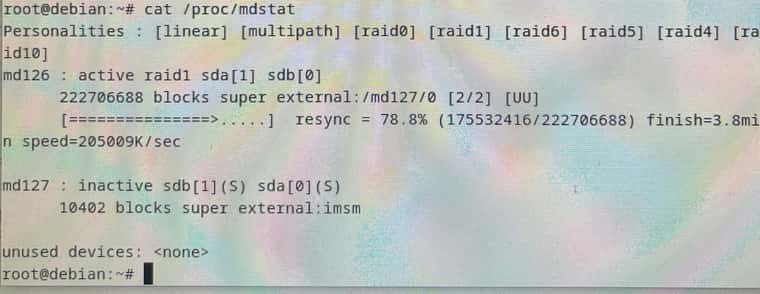

We have one global Win10 image, “Single Disk Resizable”, and I want to deploy the image to the Intel VROC RAID 1.

In debug mode, cat /proc/mdstat is empty.

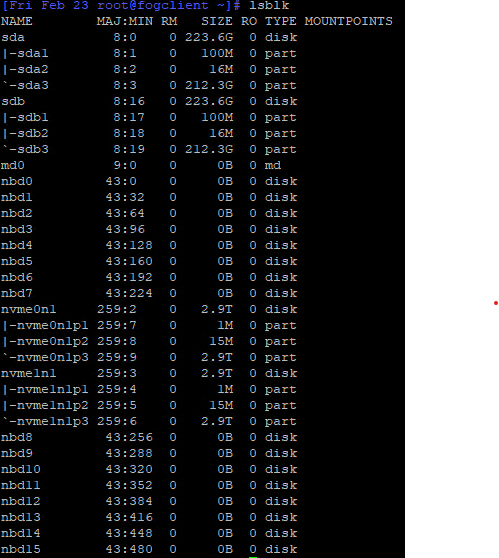

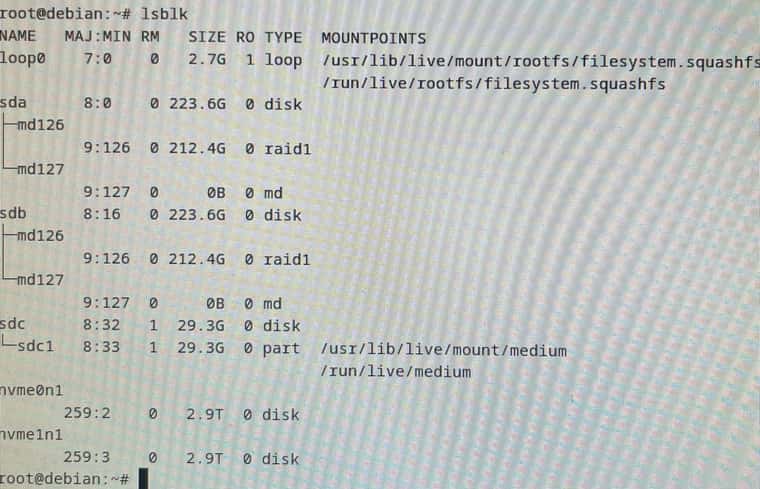

But with lsblk I also find md0.

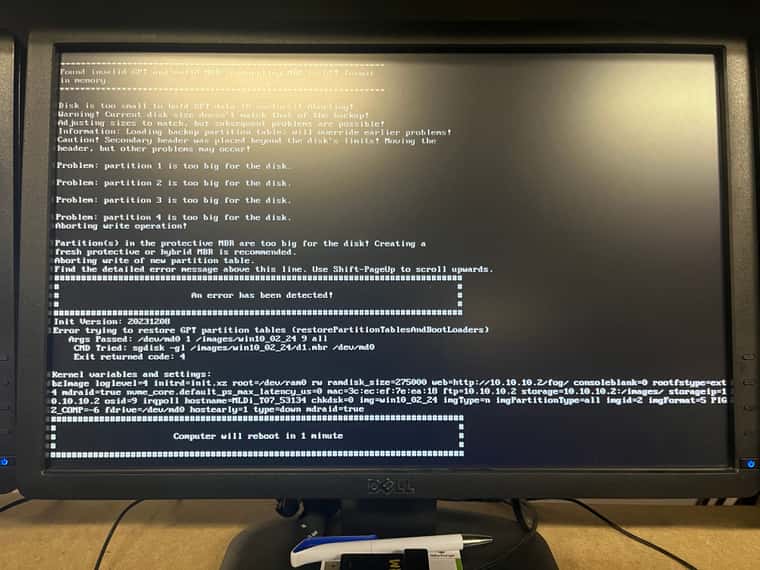

When I upload the image I get the following error.

I don’t think FOS recognises the raid correctly.

Any ideas here?

-

@nils98 from the cat command it doesn’t look like your array was assembled. From a previous post it looks like you found a command sequence with mdadm to assemble that array.
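
If you want to check that from a script rather than by eye, something along these lines should list the arrays the kernel reports as active. It is a minimal sketch that assumes the usual /proc/mdstat layout (lines such as "md126 : active raid1 sda[1] sdb[0]"); the function name active_md_arrays is made up.

```shell
#!/bin/bash
# Print the names of md arrays that /proc/mdstat reports as active.
# An optional argument lets you point it at a saved copy of mdstat.
active_md_arrays() {
    local mdstat="${1:-/proc/mdstat}"
    # Array lines look like: "md126 : active raid1 sda[1] sdb[0]"
    awk '$1 ~ /^md/ && $2 == ":" && $3 == "active" { print $1 }' "$mdstat"
}

# Example:
# if [ -z "$(active_md_arrays)" ]; then
#     echo "No active md array, assemble it before deploying"
# fi
```

An empty result matches what you are seeing: the kernel has no assembled array, so there is nothing for the imaging step to write to.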

If you schedule a debug deploy (tick the debug checkbox before hitting the submit button) and PXE boot the target computer, that will put you into debug mode. Now type in the command sequence to assemble the array. Once you verify the array is assembled, key in

fog

to begin the image deployment sequence. You will have to press enter at each breakpoint. This will give you a chance to see and possibly trap any errors during deployment. If you find an error, press ctrl-C to exit the deployment sequence and fix the error. Then restart the deployment sequence by keying in fog once again. Once you get all the way through the deployment sequence and the target system reboots and comes up in Windows, you have established a valid deployment path.

Now that you have established the commands needed to build the array before deployment, you need to place those commands into a pre deployment script so that the FOS engine executes them every time a deployment happens. We can work on the script to have it execute only under certain conditions, but first let’s see if you can get 1 good manual deployment, 1 good auto deployment, and then the final solution.

-

@george1421 I have now tried to create a new RAID via FOG. But unfortunately it doesn’t work properly. It creates something under /dev/md0 but FOG cannot find it, and /dev/md0 no longer appears even with lsblk.

In addition, I can no longer see the RAID in the BIOS. I can then create a new one there, which FOG sees under “lsblk”, but again it can’t do anything with it.

I found a few more commands in the Intel document, but all of them only produce errors.

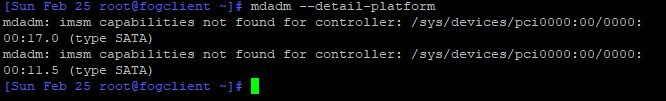

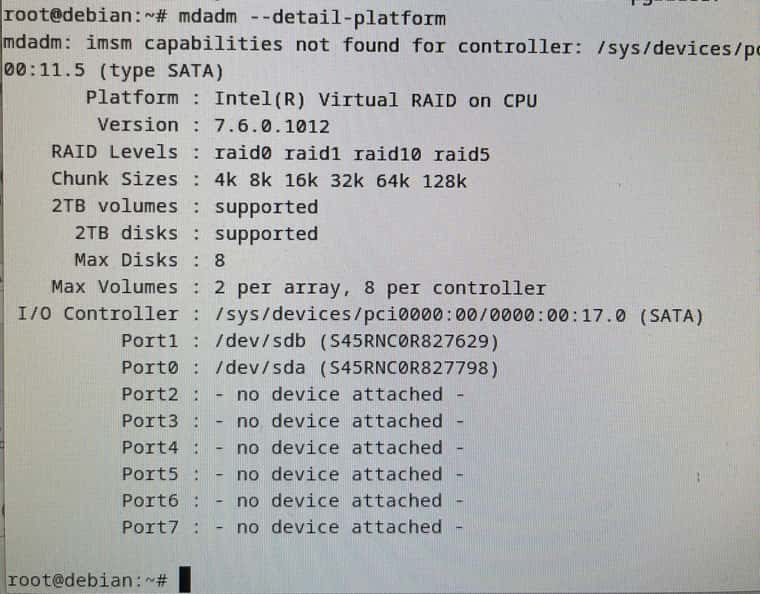

“mdadm --detail-platform” for example.

Is there a way I can load the VROC drivers into FOG?

I’m not that experienced with this, but I think it would solve some of my problems.

-

@nils98 I guess let’s run these commands to see where they go.

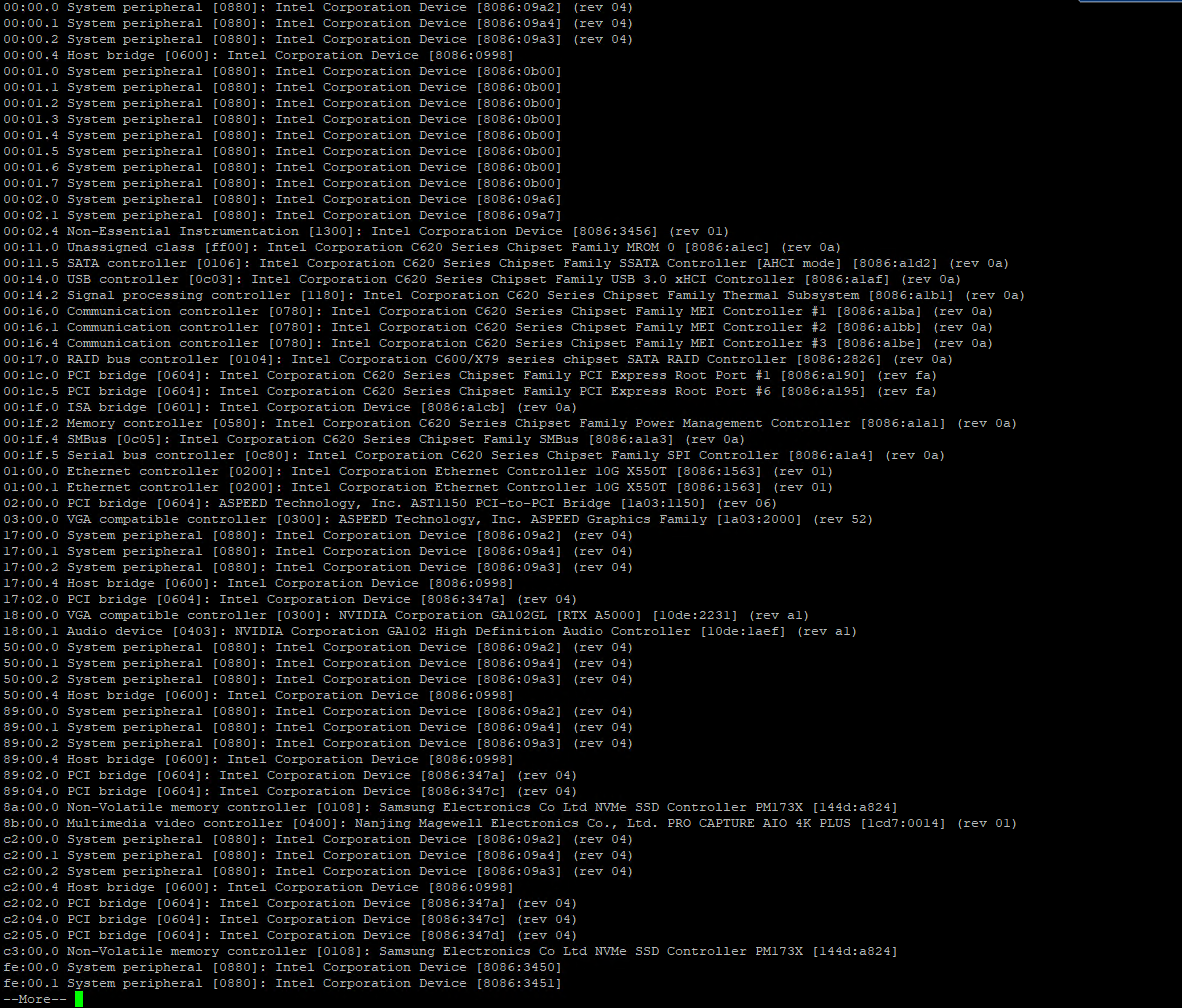

lspci -nn | more

We want to look through the output. We are specifically looking for hardware related to the disk controller. Normally I would have you look for raid or sata but I think this hardware is somewhere in between. I specifically need the hex code that identifies the hardware. It will be in the form of [XXXX:XXXX] where the X’s will be a hex value.

The output of

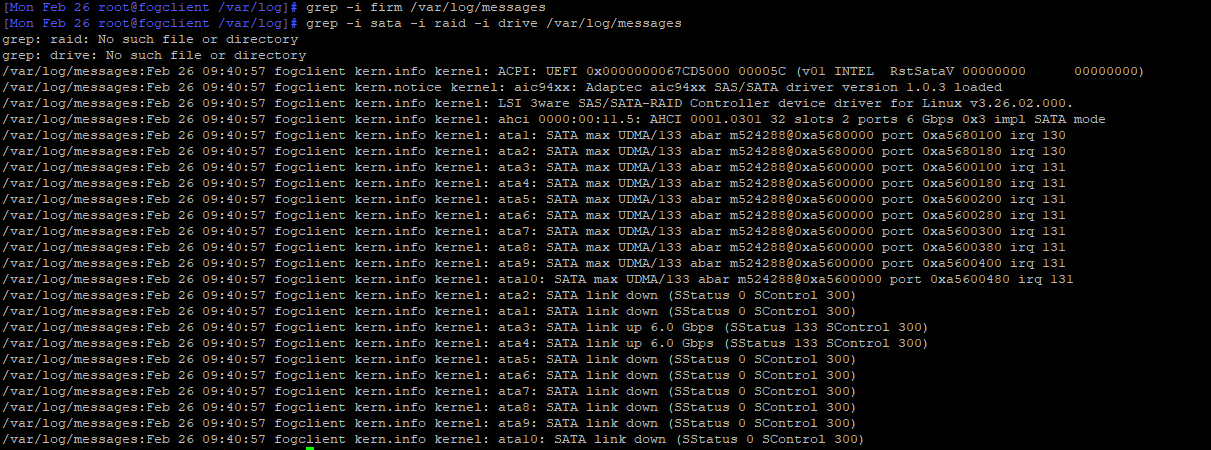

lsblk

Then this one is going to be a bit harder. Let’s run this command, but if it doesn’t output anything then you will have to manually look through the log file to see if there are any messages about missing drivers.

grep -i firm /var/log/syslog

The first one will show us if we are missing any supplemental firmware needed to configure the hardware.

grep -i -e sata -e raid -e drive /var/log/syslog

This one will look for those keywords in the syslog. If that fails you may have to manually look through this log.
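
Depending on the system, the log may live in /var/log/syslog or /var/log/messages, so a small wrapper can save some typing. This is just a sketch: scan_boot_log is a made-up name, and the keyword list is the same one used in the grep commands above.

```shell
#!/bin/bash
# Search the first readable log file among the given paths for
# firmware and disk-controller related keywords (case-insensitive).
scan_boot_log() {
    local log
    for log in "$@"; do
        if [ -r "$log" ]; then
            # No match is not an error here, it just means nothing was logged.
            grep -i -E 'firm|sata|raid|drive' "$log" || true
            return 0
        fi
    done
    echo "No readable log file found" >&2
    return 1
}

# Example:
# scan_boot_log /var/log/syslog /var/log/messages
```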

-

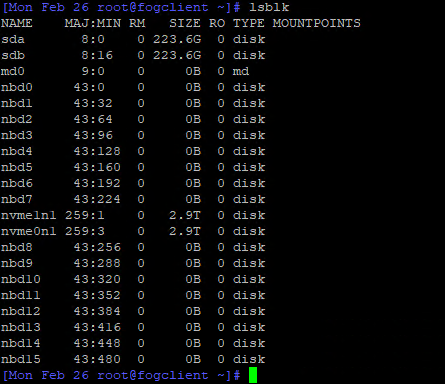

@george1421 Here is the output.

lsblk

Unfortunately there is no syslog, but I found something under /var/log/messages.

I hope it is OK that I just send the screenshots.

If you want me to look for more in the messages, please let me know.

-

@nils98 There are a few interesting things in here, but nothing remarkable. I see this is a server chassis of some kind. I also see there are SATA and NVMe disks in this server. From a quick look at VROC, it appears to be designed for NVMe drives rather than SATA, and it is on-CPU RAID.

Is your array with the sata drives /dev/sda and /dev/sdb or with the nvme drives?

I remember seeing something in the forums regarding the intel xscale processor and vmd. I need to see if I can find those posts.

For completeness, what is the manufacturer and model of this server? What is the target OS for this server? Did you set up the raid configuration in the BMC or firmware, so the drive array is already configured?

And finally, if you boot a Linux live CD, does it properly see the raid array?

Lastly, for debugging with FOS Linux, if you do the following you can remote into the FOS Linux system.

- PXE boot into debug mode (capture or deploy)

- Get the IP address of the target computer with

ip a s

- Give root a password with

passwd

Just make it something simple like hello; it will be reset at the next reboot.

- Now with putty or ssh you can connect to the FOS Linux engine to run commands remotely. This makes it easier to copy and paste into the FOS Linux engine.

-

@nils98 I don’t know your prerequisites.

Our machines get delivered with a preinstalled Windows.

The RAID 1 is also already assembled.

We do not create a RAID 1 via mdadm in FOG. Also, I did not inject any drivers for VROC.

I think /dev/md0 gets created because of the use of the kernel parameter “mdraid=true”, but it’s empty.

If you check in the BIOS/UEFI, is there a RAID 1 shown? If not, can you create one? I never had problems seeing my preassembled VROC RAID 1 with “lsblk” in debug mode.
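
To confirm whether that kernel parameter is actually set on a given boot, you could check /proc/cmdline in debug mode. A minimal sketch, with has_kernel_arg being a made-up helper name:

```shell
#!/bin/bash
# Succeed if the exact argument (e.g. mdraid=true) appears on the kernel
# command line. The second parameter allows testing against a saved copy.
has_kernel_arg() {
    local wanted="$1" cmdline_file="${2:-/proc/cmdline}"
    # Put one argument per line, then look for an exact whole-line match.
    tr ' ' '\n' < "$cmdline_file" | grep -qxF -- "$wanted"
}

# Example:
# if has_kernel_arg mdraid=true; then
#     echo "mdraid=true is set for this boot"
# fi
```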

-

@george1421 The VROC RAID is created from the SATA drives /dev/sda and /dev/sdb.

The NVMe drives are only content disks that are later connected to Windows.

The board is a Supermicro X12SPI-TF running Windows 10.

Last time we created the raid with the Windows install. But I had already created it via the BIOS. Actually it is already created and I don’t want to touch it with FOG.

I will test a Linux live system later.

I had already read about connecting via Putty here, thanks.

@Ceregon That was exactly the same for us.

The BIOS shows me a RAID 1 with both SSDs and I can also create a new one there if necessary.

As you can see above, no raid is listed via “lsblk”.

-

@george1421 With Debian 12 live, the RAID and VROC are recognized.

Any ideas what I can change in FOS to make it look exactly like this?

-

@nils98 Nice, this means it’s possible with the FOG FOS kernel. If the Linux live CD did not work then you would be SOL.

OK, so let’s start by running these commands under the live image.

lsmod > /tmp/modules.txt

lspci -nnk > /tmp/pcidev.txt

Use scp or winscp on Windows to copy these tmp files out and post them here. Also grab /var/log/messages or /var/log/syslog and post them here. Let me take a look at them to see 1) what dynamic modules are loaded and 2) which kernel modules are linked to the PCIe devices.
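
If you want a shorter view of pcidev.txt, a sketch like the following should reduce it to one line per device that has a driver bound. It assumes the usual lspci -nnk layout (an unindented device line containing [vendor:device], followed by indented "Kernel driver in use:" lines); pci_drivers is a made-up name.

```shell
#!/bin/bash
# Print "vendor:device driver" for every PCI device in a saved
# `lspci -nnk` listing that reports a kernel driver in use.
pci_drivers() {
    awk '
        # Device lines are unindented and start with the hex bus address.
        /^[0-9a-f]/ {
            id = "unknown"
            if (match($0, /\[[0-9a-f][0-9a-f][0-9a-f][0-9a-f]:[0-9a-f][0-9a-f][0-9a-f][0-9a-f]\]/))
                id = substr($0, RSTART + 1, 9)
        }
        # Indented detail line naming the bound driver.
        /Kernel driver in use:/ { print id, $NF }
    ' "$1"
}

# Example:
# pci_drivers /tmp/pcidev.txt
```

Running the same thing against a listing taken under FOS would show which devices have a driver on the live system but none under FOS.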

-

@george1421 Here are the files.

Unfortunately I have not found a messages or syslog file; I have only found a boot log file in the folder.

-

@nils98 Nothing is jumping out at me as to the required module. The VMD module is required for VROC and that is part of the FOG FOS build. Something I hadn’t asked you before: what version of FOG are you using and what version of the FOS Linux kernel are you using? If you PXE boot into the FOS Linux console then run

uname -a

it will print the kernel version.

-

@george1421

FOG currently has version 1.5.10.16.

FOS 6.1.63

I set up the whole system a month ago. I only took over the clients from another system, which had FOG version 1.5.9.122.

The RAID PC has now been added.

-

@nils98 said in Problem Capturing right Host Primary Disk with INTEL VROC RAID1:

FOS 6.1.63

OK good deal I wanted to make sure you were on the latest kernel to ensure we weren’t dealing with something old.

I rebuilt the kernel last night with what I thought might be missing, then I saw that mdadm was updated so I rebuilt the entire FOS Linux system, but it failed on the updated mdadm program. It was getting late last night so I stopped.

With the Linux kernel 6.1.63, could you PXE boot it into debug mode and then give root a password with

passwd

and collect the IP address of the target computer with

ip a s

then connect to the target computer using root and the password you defined. Download /var/log/messages and/or syslog if they exist. I want to see if the 6.1.63 kernel is calling out for some firmware drivers that are not in the kernel by default. If I can do a side by side with what you posted from the live Linux kernel I might be able to find what’s missing.

-
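
For the module part of a side by side, a sketch like this prints the modules that appear in one saved lsmod listing but not in another. The names module_names and missing_modules and the file paths in the example are placeholders. Keep in mind that a driver compiled into the kernel never shows up in lsmod, so a name reported here is only a lead and does not prove the driver is missing.

```shell
#!/bin/bash
# Print the sorted module names from a saved `lsmod` listing,
# skipping the "Module Size Used by" header line.
module_names() {
    awk 'NR > 1 { print $1 }' "$1" | sort -u
}

# Print modules present in the first listing but absent from the second.
missing_modules() {
    local first_list second_list
    first_list=$(mktemp)
    second_list=$(mktemp)
    module_names "$1" > "$first_list"
    module_names "$2" > "$second_list"
    # comm -23 keeps lines that occur only in the first sorted file.
    comm -23 "$first_list" "$second_list"
    rm -f "$first_list" "$second_list"
}

# Example (both file names are placeholders):
# missing_modules live-modules.txt fos-modules.txt
```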

@george1421 here is the message file