NVMe devices detected in random order / changing the hd variable on the fly

-

FOG Web Server 10.15.9.4

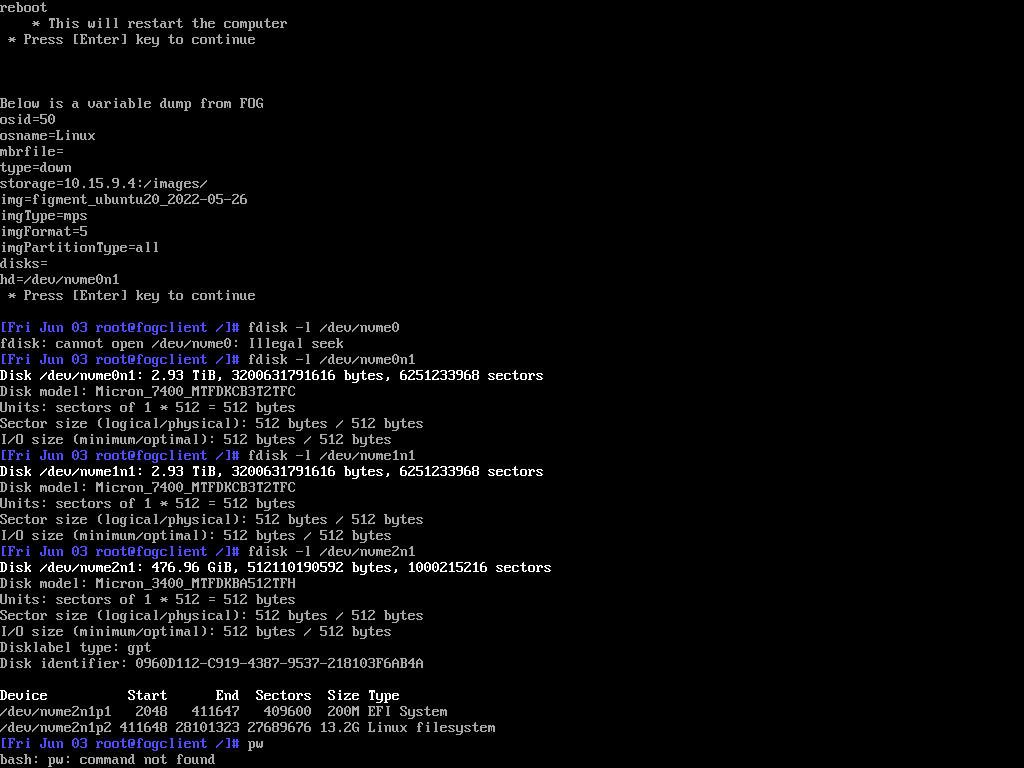

Ubuntu 18.04.6 LTS

I’m deploying to a small number of hosts with identical hardware. These hosts have three NVMe drives, which are detected as nvme0n1, nvme1n1 and nvme2n1. One of these is ~500 GB and the other two are ~3 TB:

    Disk /dev/nvme0n1: 2.93 TiB, 3200631791616 bytes, 6251233968 sectors
    Disk /dev/nvme1n1: 2.93 TiB, 3200631791616 bytes, 6251233968 sectors
    Disk /dev/nvme2n1: 476.96 GiB, 512110190592 bytes, 1000215216 sectors

My objective is to deploy my image to the small NVMe drive. The challenge is that each time I reboot, these drives are detected in a random order, so designating the group or host primary disk does not deploy the image to the correct drive in all cases.

Is it possible to change the ‘hd’ variable on the fly, such as during a debug deploy task? Or is there a better approach? I could ask remote hands to disconnect the larger drives during deploy, but a software-based intervention is preferred.
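
One software-only way to find the small drive regardless of enumeration order is to compare sizes instead of names. The sketch below is a minimal illustration, not part of FOG; it assumes a Linux environment where `/sys/block/<dev>/size` reports capacity in 512-byte sectors (the kernel's convention for that file), and the function names `read_nvme_sizes` and `smallest_disk` are hypothetical.

```python
import os
import re

# NVMe namespaces appear under /sys/block as nvme0n1, nvme1n1, ...
# The pattern deliberately skips hidden multipath paths such as nvme0c0n1.
NVME_NAME = re.compile(r"^nvme\d+n\d+$")
SECTOR_BYTES = 512  # /sys/block/<dev>/size is always in 512-byte units


def read_nvme_sizes(sys_block="/sys/block"):
    """Return {kernel_name: size_in_bytes} for every NVMe namespace found."""
    sizes = {}
    for name in os.listdir(sys_block):
        if NVME_NAME.match(name):
            with open(os.path.join(sys_block, name, "size")) as f:
                sizes[name] = int(f.read().strip()) * SECTOR_BYTES
    return sizes


def smallest_disk(sizes):
    """Return the /dev path of the smallest disk in a {name: bytes} mapping."""
    if not sizes:
        raise ValueError("no disks found")
    return "/dev/" + min(sizes, key=sizes.get)
```

With the sizes from the fdisk output above, `smallest_disk` picks `/dev/nvme2n1`; if the next boot renames the small drive to `nvme0n1`, the same call follows it, because the choice is recomputed from sizes on every boot.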

-

I didn’t find a solution, but went with a workaround: designate a host primary disk, then create a debug deploy task. Once the host booted, I checked the NVMe order. If it was correct, I ran ‘fog’ to deploy; if not, I rebooted the host and tried again. Time-consuming, but the outcome was correct.
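
The manual check in that workaround boils down to one decision: is the configured primary disk the small drive on this boot? A minimal sketch of that decision follows; the 1 TB threshold and the name `deploy_or_reboot` are assumptions for this particular hardware (one ~500 GB drive, two ~3 TB drives), not FOG settings.

```python
import os

# Hypothetical threshold: anything at or below 1 TB counts as the "small"
# drive on these hosts (~500 GB versus ~3 TB).
SMALL_DISK_MAX_BYTES = 10**12


def deploy_or_reboot(primary_disk, sizes, max_bytes=SMALL_DISK_MAX_BYTES):
    """Return 'deploy' if primary_disk (e.g. '/dev/nvme2n1') is the small
    drive on this boot, else 'reboot'. sizes maps kernel names to bytes."""
    name = os.path.basename(primary_disk)
    size = sizes.get(name)
    if size is not None and size <= max_bytes:
        return "deploy"
    return "reboot"
```

This only automates the check; it still relies on rebooting until the order happens to match, which is why selecting the disk by size or identifier is the more robust fix.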

-

I wonder how many people want to deploy to systems with multiple disks. I also wonder how hard it would be to tell FOG which disk to read the image from and which disk to write it to. Maybe a checkbox for “my source and destination systems have multiple disks”, which would open additional menus in the FOG UI where more detail, such as the expected disk size, could be entered.
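
An "expected disk size" option would need a tolerance (vendors round capacities) and a uniqueness check, since two identical drives cannot be told apart by size alone. A minimal sketch of that matching rule, assuming a 5% tolerance; the function name and tolerance are hypothetical and do not describe how FOG implements this.

```python
def match_expected_size(sizes, expected_bytes, tolerance=0.05):
    """Return the /dev path of the single disk whose size is within
    `tolerance` (a fraction) of expected_bytes.

    Raises LookupError when zero or several disks match, because picking
    one of several equal-sized disks would again depend on kernel order.
    """
    matches = sorted(
        name
        for name, size in sizes.items()
        if abs(size - expected_bytes) <= tolerance * expected_bytes
    )
    if len(matches) != 1:
        raise LookupError(f"expected exactly one matching disk, found {matches}")
    return "/dev/" + matches[0]
```

On the hosts in this thread, an expected size of 500 GB selects the small drive unambiguously, while 3.2 TB matches both large drives and is rejected.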

-

@david-burgess @Wayne-Workman With the latest dev-branch version, you should be able to use disk size, WWN, or disk identifier to force the disk order. It’s a known issue that the Linux kernel enumerates NVMe drives in a different order from one boot to the next.
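
To illustrate why a WWN or other persistent identifier helps: on Linux systems running udev, `/dev/disk/by-id` holds symlinks named after stable IDs that point at whatever kernel name the drive received on this boot. The sketch below is a generic Linux illustration, not FOG's dev-branch implementation; it assumes the boot environment populates `/dev/disk/by-id`, and the link names (e.g. `wwn-0x…` or `nvme-eui.…`, depending on drive and udev rules) and the function `resolve_by_id` are hypothetical.

```python
import os


def resolve_by_id(disk_id, by_id_dir="/dev/disk/by-id"):
    """Resolve a persistent identifier (a symlink name inside by_id_dir)
    to the current /dev/<kernel name>, e.g. '/dev/nvme2n1'."""
    link = os.path.join(by_id_dir, disk_id)
    if not os.path.islink(link):
        raise FileNotFoundError(f"no such identifier: {link}")
    # realpath follows the symlink (e.g. '../../nvme2n1') to the device node.
    return "/dev/" + os.path.basename(os.path.realpath(link))
```

The identifier is recorded once per drive; after that, the kernel name can change on every boot without affecting which physical disk is selected. For the actual FOG option, see the forum topic mentioned below.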

Search the forums for “nvme wwn” and you should find a topic with helpful information on this.