Identical NVMe drives

-

@sebastian-roth Yes, I’m thinking of disk UUIDs or the serial number.

-

@mrp When we struggled with this I didn’t think as far as only using identifiers for deployment. I will see what I can do when I get back from my holiday in a couple of days.

-

@sebastian-roth I hope you had a pleasant holiday. Any updates on this issue, or should I maybe open an issue on GitHub to track this?

-

@mrp No, unfortunately have not found enough time to work this out. But I have it on my list and will get to it in the next two weeks I reckon.

-

@mrp Sorry for the delay. Found some time to work on this finally.

Here you can download a first test version of the updated init file: https://github.com/FOGProject/fos/releases/download/20210807/init_adv_primary_disk.xz (it will be removed as soon as we have enough evidence that it works as intended and doesn’t break anything else, at which point it will be included in the official init)

You can use any combination of disk serial (lsblk -pdno SERIAL /dev/sda), WWN (lsblk -pdno WWN /dev/sda), disk size (blockdev --getsize64 /dev/sda) or simple device name (/dev/sda) as Host Primary Disk setting, disk entries separated by comma, for example S1DBNSBFXXXXXXXX,549755813888,/dev/sdc (serial, size, device name).

@mrp @testers May I ask you to give it a try and see if it works as expected. Please test as many different setups as possible (capture/deploy, All Disk/non-resizeable/resizeable image type, unicast/multicast) to make sure this change doesn’t break other parts.
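To make the intended matching concrete, here is a minimal sketch of how a comma-separated Host Primary Disk value could be mapped onto the enumerated disks. This is not the code in the FOS init. The function name order_disks, the four-column table format and the rule that unmatched disks are appended in enumeration order are all assumptions made for illustration.

```shell
# Hypothetical sketch, not the actual FOS init code.
# order_disks SETTING TABLE
#   SETTING: Host Primary Disk value, e.g. "S1DBNSBFXXXXXXXX,549755813888,/dev/sdc"
#   TABLE:   one disk per line as "NAME SERIAL WWN SIZE" ("-" for unknown fields)
# Prints device names in the order requested by SETTING. Disks that match no
# entry are appended in their original order (an assumption of this sketch).
order_disks() {
    printf '%s\n' "$2" | awk -v setting="$1" '
        # Compare as strings so identifiers made of digits are not treated as numbers.
        function same(a, b) { return (a "") == (b "") }
        NF >= 1 { n++; name[n] = $1; serial[n] = $2; wwn[n] = $3; size[n] = $4 }
        END {
            count = split(setting, entry, ",")
            for (e = 1; e <= count; e++) {
                for (i = 1; i <= n; i++) {
                    if (used[i]) continue
                    if (same(entry[e], name[i]) || same(entry[e], serial[i]) ||
                        same(entry[e], wwn[i]) || same(entry[e], size[i])) {
                        print name[i]
                        used[i] = 1
                        break
                    }
                }
            }
            # Anything not named in the setting keeps its enumeration order.
            for (i = 1; i <= n; i++) if (!used[i]) print name[i]
        }'
}
```

Each entry claims the first disk that has not been picked yet, so repeating the same size twice selects two identical drives one after the other. The table itself would have to be assembled from the lsblk and blockdev commands mentioned above; that part is left out here.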

-

@sebastian-roth No problem, I’m glad we have a fix for this now. The Host Primary Disk setting with the disks separated with commas is exactly what we wanted, so I’m enthusiastic to test the fix.

I have some time next week, so I’ll do some captures and deployments on the 13th of October and test the new init file. I’ll come back to you then with our results.

-

@mrp I just added another fix to the init. Make sure you re-download the file from the link below (updated the file) before you start testing.

-

@sebastian-roth Yesterday I downloaded the init file (init_adv_primary_disk.xz) and replaced the /var/www/html/fog/service/ipxe/init.xz file with the downloaded one.
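For anyone repeating this step, the replacement can be sketched as a small shell function that keeps a backup of the previous init before overwriting it. The function name replace_init and the .bak suffix are illustrative assumptions; only the two file names come from this post.

```shell
# Hypothetical helper: back up the current init, then install the test version.
# replace_init SOURCE DEST
replace_init() {
    src=$1
    dest=$2
    # Refuse to continue if the downloaded file is missing.
    [ -f "$src" ] || { echo "missing: $src" >&2; return 1; }
    # Keep the previous init so the change can be rolled back.
    if [ -f "$dest" ]; then
        cp -p "$dest" "$dest.bak" || return 1
    fi
    cp "$src" "$dest"
}

# Example use on the FOG server (commented out, it needs write access):
#   replace_init init_adv_primary_disk.xz /var/www/html/fog/service/ipxe/init.xz
```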

I did multiple single and multi-disk deployments (multiple partition not resizable) as well as multiple single and multi-disk captures (multiple partition not resizable). I specified the order of disks using the Host Primary Disk field (wwn(nvme),wwn(nvme),device-name(sata) ; serial(nvme),serial(nvme),device-name(sata) ; also single disk capture and deploy by specifying a single disk like serial(nvme) or wwn(nvme)).

With the new init file, the NVME drives still got picked up seemingly randomly in all tested scenarios.

When working on single NVME drives (specified with serial or WWN), the partclone progress screen always showed /dev/nvme0n1 being captured/deployed, regardless of the NVME drive being specified in FOG and the drive that was picked by FOG.

I tested both with the normal setup (2xNVME, 1xSATA) and without the SATA drive. Interestingly, when testing with (2xNVME, 1xSATA) and specifying the Host Primary Disk like WWN1,WWN2,sata_device_name (or the same with serial), the capture/deployment always started with the SATA drive and then continued with the two NVME drives.

Maybe the serial and WWN entries were found to be invalid and/or simply skipped by FOG? The WWNs and serials were correct, I double checked each of them.

-

@mrp Thanks heaps for testing and letting me know!

Can you post the actual information you set as Host Primary Disk in the FOG web UI for the specific hosts? Just a few examples.

-

@sebastian-roth I sent you the examples with WWNs and serials in chat.

-

@mrp said:

Yesterday I downloaded the init file (init_adv_primary_disk.xz) and replaced the /var/www/html/fog/service/ipxe/init.xz file with the downloaded one.

Now that I think about it again I am wondering if you checked the Init Version number shown on boot up? Just to make sure it’s the correct file used.

-

@sebastian-roth With the replaced init file it showed Init Version 20211009 during captures/deployments. Sadly, I did not think of checking whether the init version has changed. We have FOG 1.5.9, what is the default init version of that release?

-

@mrp said in Identical NVMe drives:

With the replaced init file it showed Init Version 20211009 during captures/deployments.

Perfectly fine! It’s the latest as of now.

We have FOG 1.5.9, what is the default init version of that release?

Not sure exactly, but more like 20200906 - definitely a huge difference to the 20211009 you have now.

I will look into this over the weekend!

-

@mrp Found some time to look into this again. The issue I have with testing this is that neither serial nor WWN can be looked up by lsblk in my VirtualBox setup. I have no idea why this is the case. Tools like udevadm, hdparm and so on show serial and WWN but lsblk does not. So in my tests I am using the disk size (blockdev --getsize64 /dev/sda) as parameter and it works.

I added some debug statements to the scripts to further debug this. Please download the updated init, place it on your FOG server, schedule a debug deploy task and boot the host up. After the PXE boot you should see this message: “Trying to sort enumerated disks according to Host Primary Disk setting” - New init version is 20211025.

Please take a picture of the screen where you see this message and post that here in the forums.
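Since udevadm reportedly shows the serial and WWN where lsblk does not, one way to cross-check the values is to pull them out of its property listing. A minimal sketch, assuming udevadm is available in the environment and that the disk exposes the usual ID_SERIAL_SHORT and ID_WWN properties (not every device does); udev_prop is a hypothetical helper name.

```shell
# Hypothetical helper: print the value of one KEY from KEY=VALUE lines on stdin.
udev_prop() {
    # Anchoring on "KEY=" keeps ID_WWN from also matching ID_WWN_WITH_EXTENSION.
    sed -n "s/^$1=//p" | head -n 1
}

# Example use (needs a real disk, so left commented out):
#   udevadm info --query=property --name=/dev/sda | udev_prop ID_SERIAL_SHORT
#   udevadm info --query=property --name=/dev/sda | udev_prop ID_WWN
```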

-

@sebastian-roth Sorry for the delay, today I had some time to test this.

Please download the updated init, place it on your FOG server, schedule a debug deploy task and boot the host up.

I tested the new init with debug tasks with the following host primary field values:

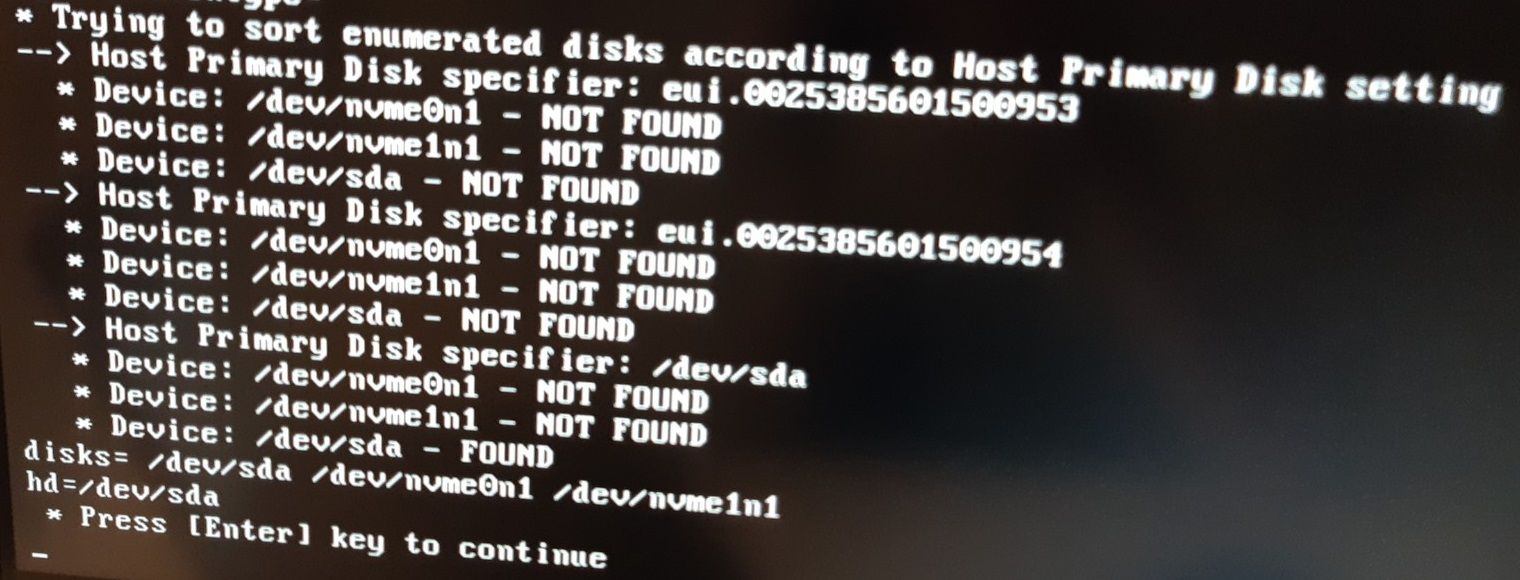

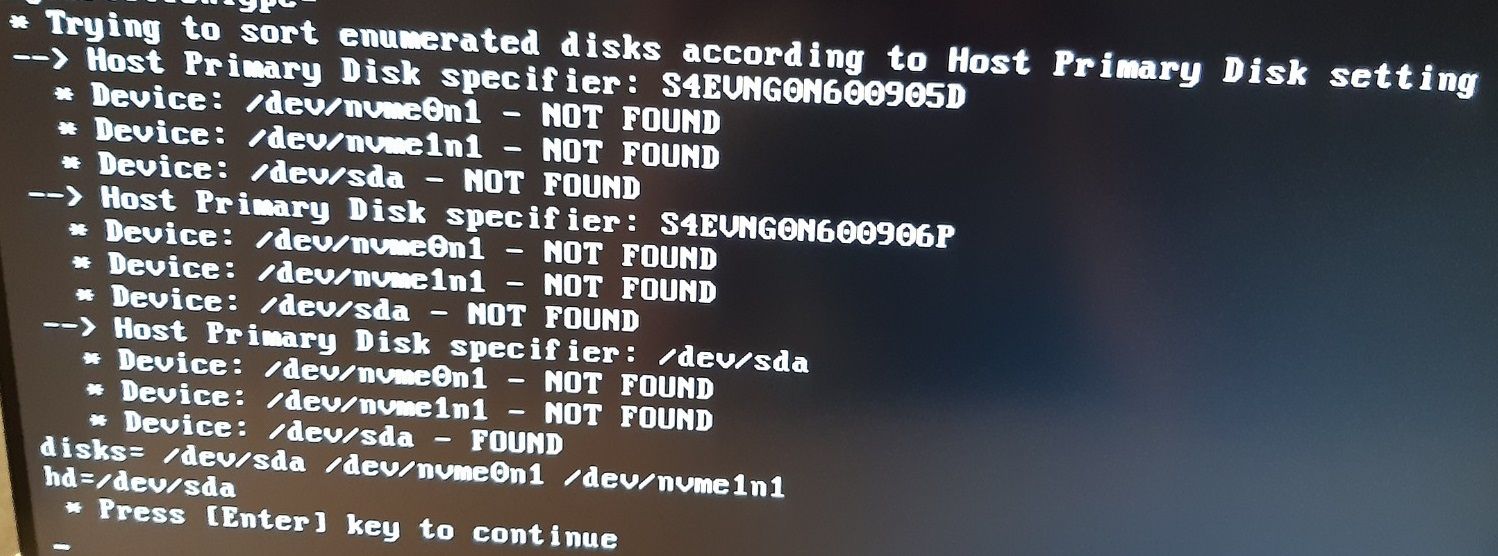

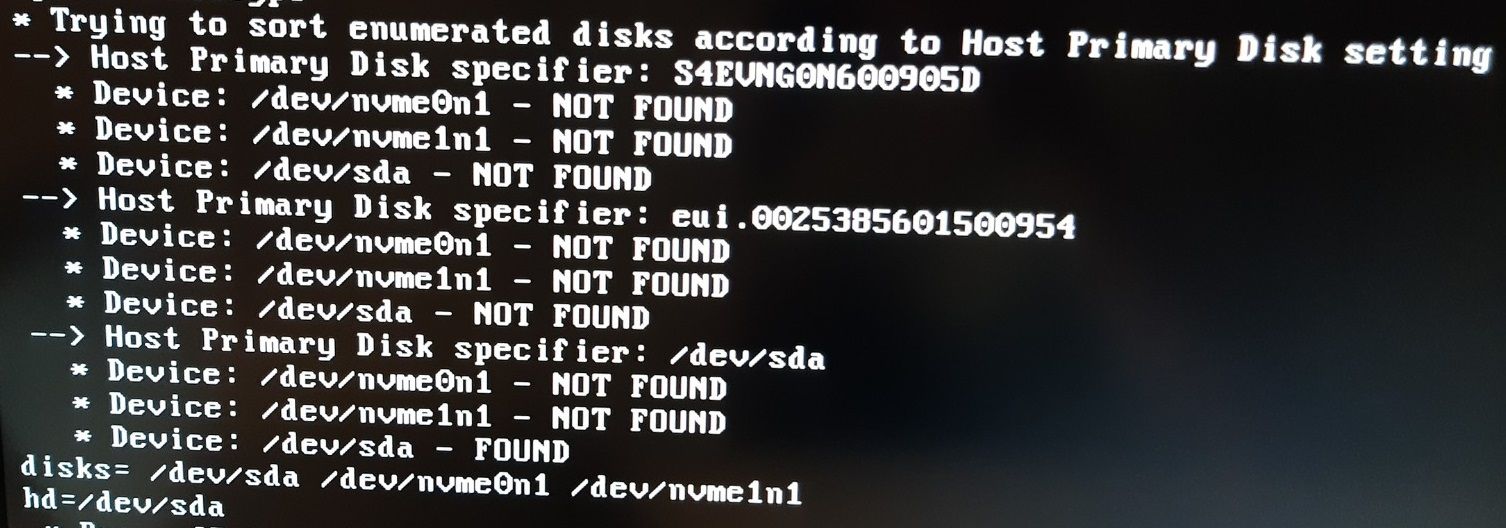

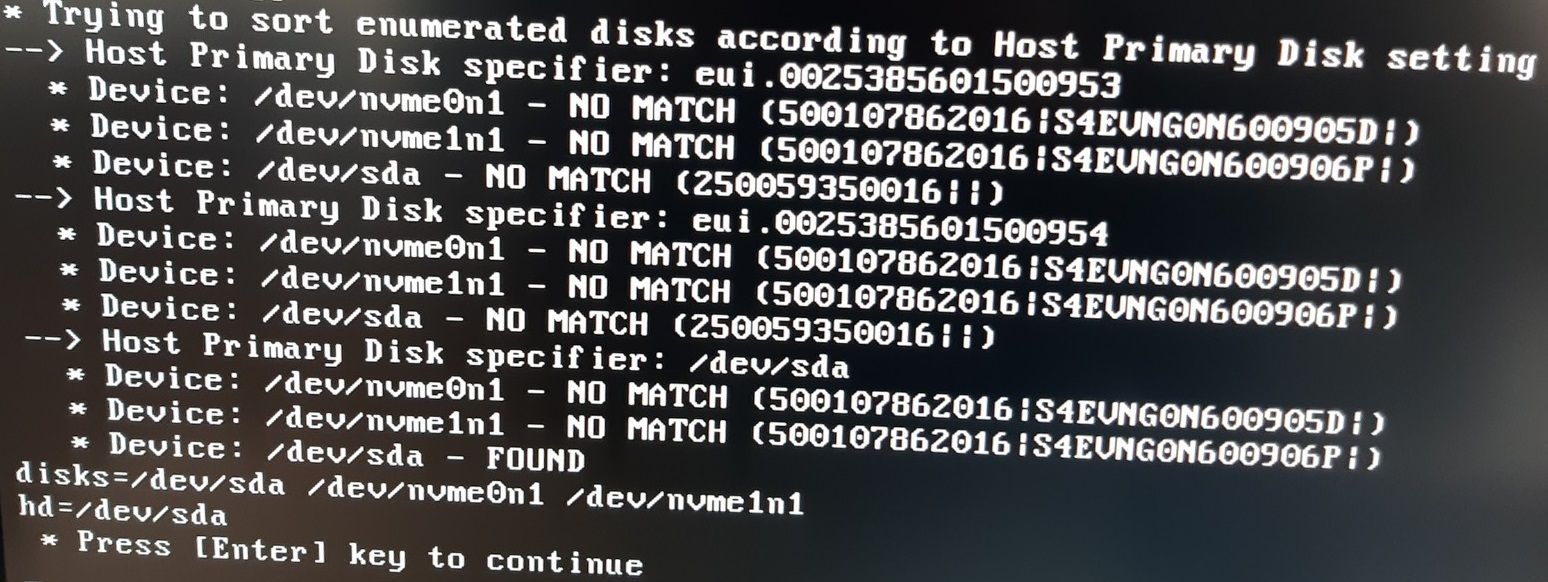

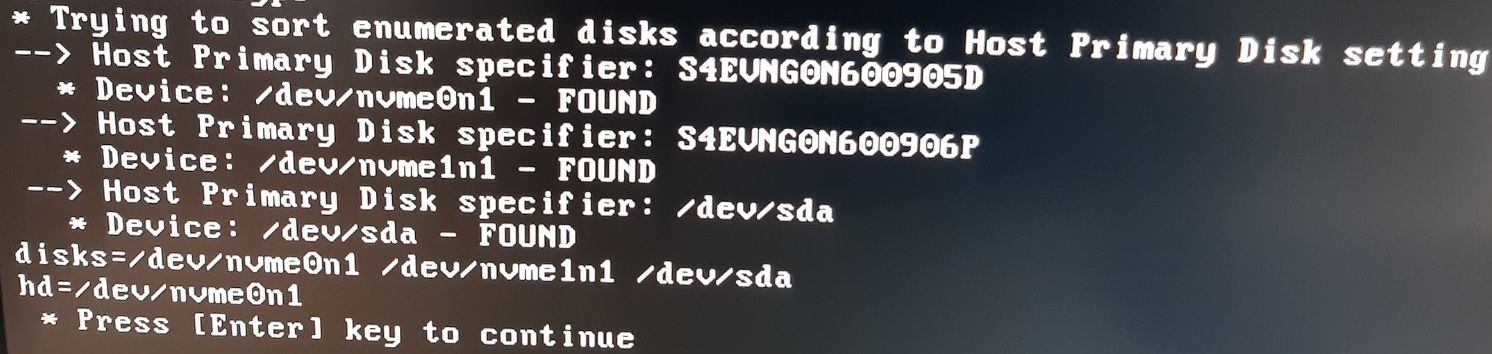

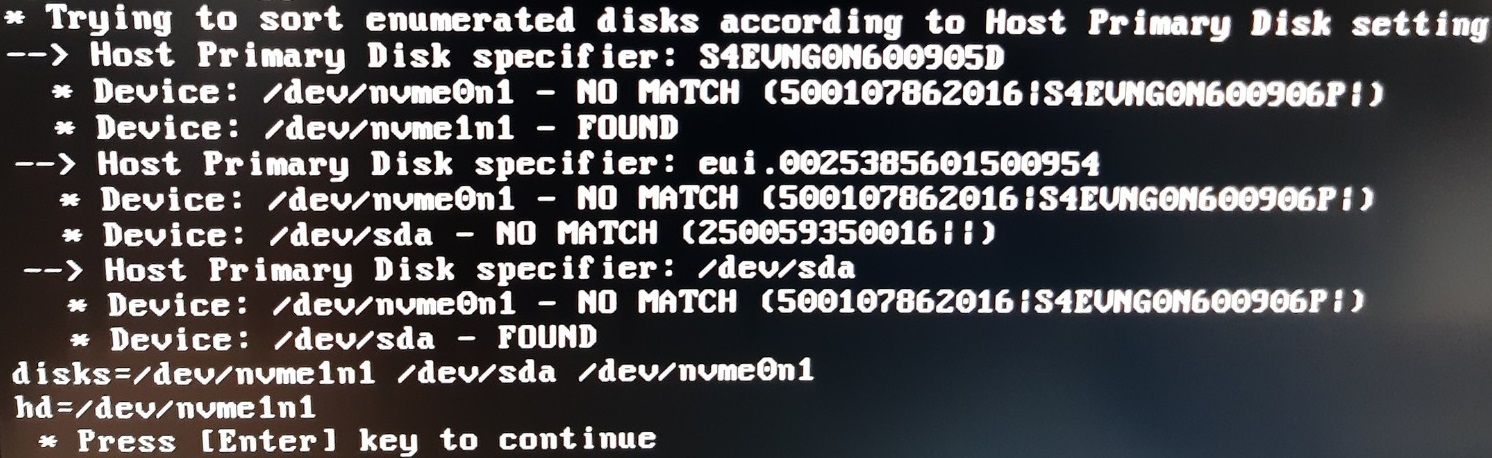

serial(nvme1),serial(nvme2),device-name(sata) = eui.0025385601500953,eui.0025385601500954,/dev/sda

wwn(nvme1),wwn(nvme2),device-name(sata) = S4EVNG0N600905D,S4EVNG0N600906P,/dev/sda

wwn(nvme1),serial(nvme2),device-name(sata) = S4EVNG0N600905D,eui.0025385601500954,/dev/sda

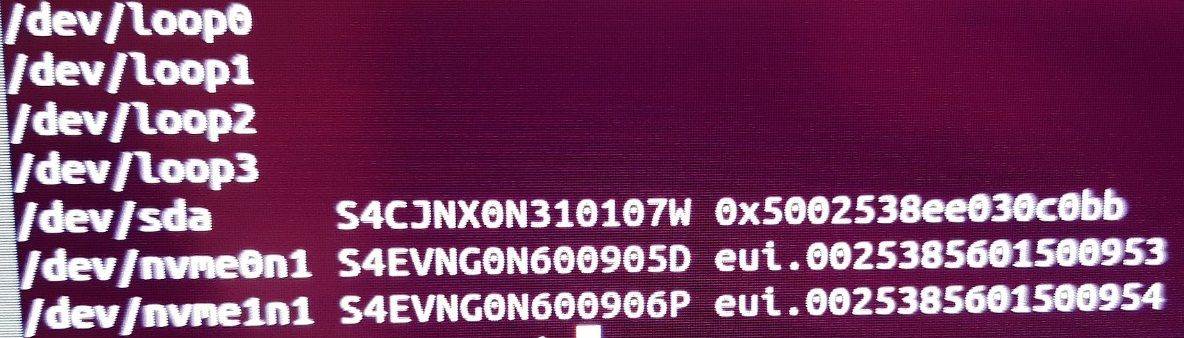

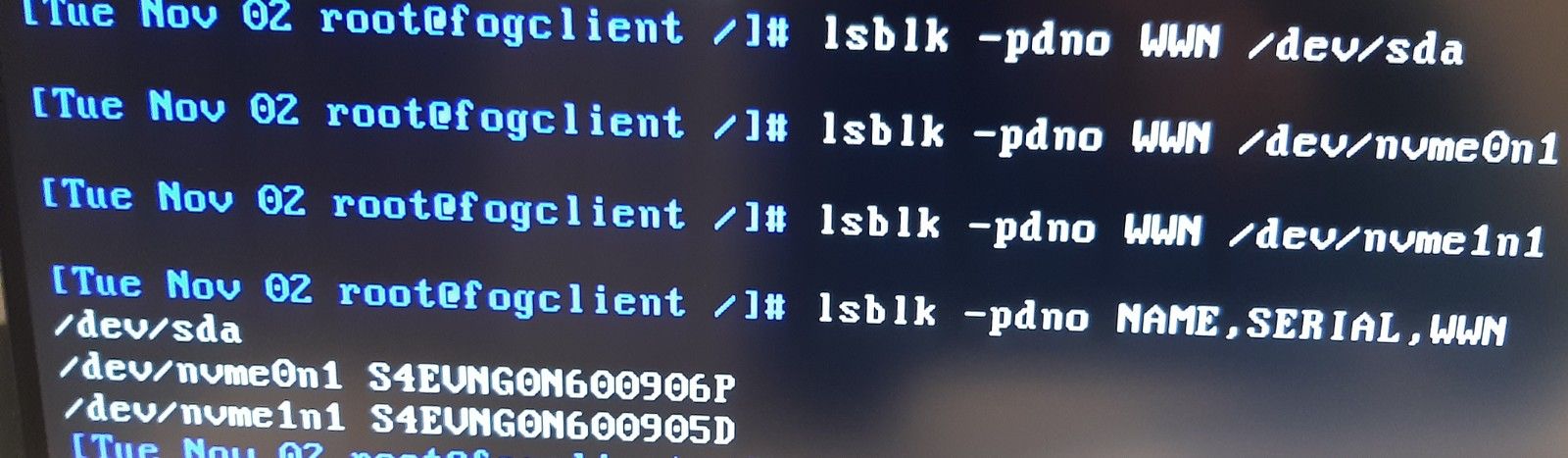

Just for double checking, I also checked lsblk -pdno NAME,SERIAL,WWN again:

-

@mrp Thanks for the pictures, great documentation of the test and your patience!!

Find another updated init binary on github that might possibly solve the issue but if not it will definitely give us further insight on why it doesn’t work with serial and WWN yet.

-

@sebastian-roth I tested the same debug tasks with the new init. It seems that the WWN cannot be retrieved by the init, but with the serial it seems to work now.

Find another updated init binary on github that might possibly solve the issue

serial(nvme1),serial(nvme2),device-name(sata) = eui.0025385601500953,eui.0025385601500954,/dev/sda

wwn(nvme1),wwn(nvme2),device-name(sata) = S4EVNG0N600905D,S4EVNG0N600906P,/dev/sda

wwn(nvme1),serial(nvme2),device-name(sata) = S4EVNG0N600905D,eui.0025385601500954,/dev/sda

-

@mrp Thanks again for testing. Looks better now but still not perfect. I wonder why it doesn’t find the WWN at all. Can you please run the following commands in a debug command prompt and post output here:

lsblk -pdno WWN /dev/sda

lsblk -pdno WWN /dev/nvme0n1

lsblk -pdno WWN /dev/nvme1n1

-

@sebastian-roth No problem, here it is:

-

@mrp Ah well I see. Our FOS lsblk command is not able to retrieve the WWN information. Too bad. Not sure if there is anything we can do about it. Good you can use the serial numbers for now.

Would be interesting to see if this is not working in general or might only be an issue on your computers.
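If lsblk cannot report the WWN, the kernel's sysfs tree may still expose it. A minimal sketch, assuming a Linux kernel that provides a wwid attribute for the block device (NVMe namespaces typically have it at /sys/block/<dev>/wwid, SCSI/SATA disks at /sys/block/<dev>/device/wwid). Whether these files are present in FOS has not been verified here, and read_wwid is a hypothetical helper name.

```shell
# Hypothetical helper: print the WWN-style identifier of a disk from sysfs.
# read_wwid DEVICE [SYSFS_ROOT]   (SYSFS_ROOT defaults to /sys)
read_wwid() {
    dev=${1#/dev/}
    root=${2:-/sys}
    # Try the NVMe location first, then the SCSI/SATA one.
    for f in "$root/block/$dev/wwid" "$root/block/$dev/device/wwid"; do
        if [ -r "$f" ]; then
            cat "$f"
            return 0
        fi
    done
    return 1
}

# Example use in a debug command prompt (commented out, needs real hardware):
#   read_wwid /dev/nvme0n1
#   read_wwid /dev/sda
```

The format of the value can differ from what lsblk prints on other systems (for example an eui. or naa. prefix), so it should be compared with the Host Primary Disk entry before relying on it.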