Problem Capturing right Host Primary Disk with INTEL VROC RAID1

-

@george1421 Here is the messages file.

-

@nils98 OK, there are a few things I gleaned by looking over everything in detail.

The stock FOS Linux kernel looks like it's working, because I see this in the messages file during boot (I do see all of the drives being detected):

```
Mar 1 15:46:40 fogclient kern.info kernel: md: Waiting for all devices to be available before autodetect
Mar 1 15:46:40 fogclient kern.info kernel: md: If you don't use raid, use raid=noautodetect
Mar 1 15:46:40 fogclient kern.info kernel: md: Autodetecting RAID arrays.
Mar 1 15:46:40 fogclient kern.info kernel: md: autorun ...
Mar 1 15:46:40 fogclient kern.info kernel: md: ... autorun DONE.
```

This tells me it's scanning but not finding an existing array. It would be handy to have the live CD startup file to verify that is the case.
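If you want to pull just these md lines out of a long messages file, a small shell helper is enough. This is a sketch that assumes the file uses the `kernel: md:` prefix shown above; the function name `md_boot_lines` and the default path /var/log/messages are assumptions, so pass the real path of your messages file.

```shell
# Print only the kernel "md:" (software RAID) lines from a syslog-style file.
# Assumption: lines carry the "kernel: md:" prefix seen in the log above,
# and /var/log/messages is only a default; pass the real file as $1.
md_boot_lines() {
    log_file=${1:-/var/log/messages}
    grep 'kernel: md:' "$log_file"
}
```

Usage: `md_boot_lines /path/to/messages`.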

Intel VROC is the rebranded Intel Rapid Storage Technology enterprise (RSTe).

There is no setting for `CONFIG_INTEL_RST` in the current kernel configuration file:
https://github.com/FOGProject/fos/blob/master/configs/kernelx64.config

It's not clear whether this is a problem or not; I was just connecting the dots between VROC and RSTe:
https://cateee.net/lkddb/web-lkddb/INTEL_RST.html

I did enable it in the test kernel below, which is based on Linux kernel 6.6.18 (hint: a newer kernel that is available via the FOG repo).
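To see how a given kernel config file sets an option (built in, module, explicitly disabled, or not mentioned at all), a grep-based helper does the job. This is a sketch: `kconfig_state` is a hypothetical name, and checking `VMD`, `BLK_DEV_MD` and `MD_RAID1` alongside `INTEL_RST` is my assumption of what else matters on a VMD/VROC box. Worth noting: the lkddb page linked above describes `INTEL_RST` as the "Intel Rapid Start Technology Driver", so it may not be the option that matters here.

```shell
# Report how a kernel .config file sets CONFIG_<option>:
# "builtin" (=y), "module" (=m), "disabled" ("# ... is not set") or "absent".
kconfig_state() {
    config_file=$1
    option=$2
    if grep -q "^CONFIG_${option}=y\$" "$config_file"; then
        echo builtin
    elif grep -q "^CONFIG_${option}=m\$" "$config_file"; then
        echo module
    elif grep -q "^# CONFIG_${option} is not set\$" "$config_file"; then
        echo disabled
    else
        echo absent
    fi
}
```

Usage, for example: `kconfig_state kernelx64.config INTEL_RST` or `kconfig_state kernelx64.config VMD`.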

https://drive.google.com/file/d/12IOjoKmEwpCxumk9zF1vtQJt523t8Sps/view?usp=drive_link

To use this kernel, copy it to the /var/www/html/fog/service/ipxe directory and keep its existing name. This will not overwrite the FOG-delivered kernel. Now go to the FOG Web UI, go to FOG Configuration -> FOG Settings and hit the Expand All button. Search for bzImage, replace the bzImage name with bzImage-6.6.18-vroc2, then save the settings. Note that this will make all of your computers that boot into FOG load this new kernel. Understand that this is untested; you can always put things back by just replacing bzImage-6.6.18-vroc2 with bzImage in the FOG configuration.
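On the FOG server, the copy step could be scripted along these lines. It's a sketch that assumes the downloaded file is already named bzImage-6.6.18-vroc2 and that the default directory from the post applies; `install_test_kernel` is a made-up helper name. The name check is only there so the stock bzImage can't be overwritten by accident.

```shell
# Copy a test kernel into FOG's iPXE directory under its own name, so the
# stock bzImage stays in place as a fallback.
install_test_kernel() {
    kernel=$1
    dest_dir=${2:-/var/www/html/fog/service/ipxe}
    name=$(basename "$kernel")
    # Never clobber the FOG-delivered kernel
    if [ "$name" = bzImage ]; then
        echo "refusing to overwrite $dest_dir/bzImage" >&2
        return 1
    fi
    cp "$kernel" "$dest_dir/$name"
}
```

Usage: `install_test_kernel ./bzImage-6.6.18-vroc2`, then change the bzImage setting in the Web UI as described.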

Now PXE boot into a debug console on the target computer.

Do the normal routine to see if lsblk and

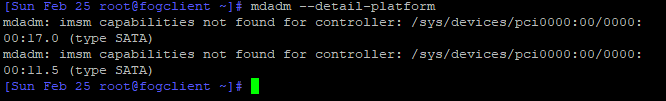

cat /proc/mdstatandmdm --detailed-platformreturns anything positive.If the kernel doesn’t assemble the array correctly then we will have to try to see if we can manually assemble the array using mdadm tool.

I should say that we need to ensure the array already exists before we perform these tests, because if the array is defunct or was never created we will not see it with the above tests.
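In the debug console, the `/proc/mdstat` check can be narrowed to "is any md array actually active?" with a one-line awk filter. Sketch only: `md_active_arrays` is a hypothetical helper, and it assumes the usual `mdX : active ...` line layout of /proc/mdstat. On an IMSM/VROC system one would typically expect an inactive container plus an active volume once assembly works, so an empty result means nothing usable was assembled.

```shell
# Print the names of md arrays that /proc/mdstat lists as "active".
# An IMSM container usually shows up as "inactive"; the RAID1 volume
# inside it is what should appear here once assembly works.
md_active_arrays() {
    mdstat=${1:-/proc/mdstat}
    awk '$1 ~ /^md/ && $2 == ":" && $3 == "active" { print $1 }' "$mdstat"
}
```

Usage: run `md_active_arrays` after `lsblk` and `mdadm --detail-platform`; no output means no active array.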

-

@george1421 Unfortunately, nothing has changed.

`mdm --detailed-platform` fails because there is no `mdm` command; with `mdadm --detail-platform` it still shows the same error.

I have also searched the log files under the live system again but unfortunately found nothing.

-

@nils98 Well that’s not great news. I really thought that I had it with including the intel rst driver. Would you mind sending me the messages log from booting this new kernel? Also make sure when you are in debug mode that you run

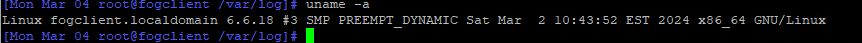

uname -aand make sure the kernel version is right. -
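To make the version check scriptable instead of eyeballing `uname -a`, something like the helper below would work. It's a sketch; `kernel_is` is a hypothetical name, and it only compares the release string (`uname -r`) against the expected version, allowing a non-numeric suffix such as a local build tag.

```shell
# Succeed if the kernel release (default: the running one) is the expected
# version, optionally followed by a non-digit suffix such as "-vroc2".
kernel_is() {
    expected=$1
    release=${2:-$(uname -r)}
    case $release in
        "$expected" | "$expected"[!0-9]*) return 0 ;;
        *) return 1 ;;
    esac
}
```

Usage: `kernel_is 6.6.18 && echo "test kernel loaded"`. The `[!0-9]` guard keeps 6.6.1 from matching a 6.6.18 release.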

-

I apologise for not getting in touch for so long.

But I was able to get the startup logs from Ubuntu live, and there my RAID is recognised directly.

Hope the logs help.

-


Hi everyone, reading the investigations already done gives me the feeling you got close to a fix for this.

I got the experimental VROC kernel from the download link earlier in this topic.

I have exactly the same issue: Intel VROC / Optane with 2 NVMe drives in RAID1.

I can see the individual NVMe drives but not the RAID array/volume. Can a fix for this be expected anytime soon?

-

@rdfeij For the record, what computer hardware do you have?

-

@george1421

SuperMicro X13SAE-F server board with Intel Optane / VROC in raid1 mode.

2x NVMe in RAID1.

-

In my case, yes: in the BIOS, a RAID1 exists over the 2 NVMe drives.

`mdraid=true` is enabled, and md0 is indeed empty.

lsblk only shows content on the 2 NVMe drives, not on md0. I hope this will be fixed soon; otherwise we are forced to move to another (WindowsPE-based?) imaging platform, since we get more and more VROC/Optane servers/workstations with RAID enabled (industrial/security usage).

I’m willing to help out to get this solved.

-

@rdfeij said in Problem Capturing right Host Primary Disk with INTEL VROC RAID1:

@george1421

SuperMicro X13SAE-F server board with Intel Optane / VROC in raid1 mode.

2x NVME in raid1.

In addition:

The NVMe RAID controller ID is 8086:a77f (https://linux-hardware.org/?id=pci:8086-a77f-8086-0000):

```
0000:00:0e.0 RAID bus controller [0104]: Intel Corporation Volume Management Device NVMe RAID Controller Intel Corporation [8086:a77f]
```

The RST controller; I think it is involved, since all other SATA controllers are disabled in the BIOS:

```
0000:00:1a.0 System peripheral [0880]: Intel Corporation RST VMD Managed Controller [8086:09ab]
```

And the NVMe drives (not involved, I think):

```
10000:e1:00.0 Non-Volatile memory controller [0108]: Sandisk Corp WD Black SN770 NVMe SSD [15b7:5017] (rev 01)
10000:e2:00.0 Non-Volatile memory controller [0108]: Sandisk Corp WD Black SN770 NVMe SSD [15b7:5017] (rev 01)
```

-

@rdfeij Well, the issue we have is that none of the developers have access to one of these new computers, so it's hard to solve.

Also, I have a project for a customer where we were loading Debian on a Dell rack-mounted Precision workstation. We created a RAID 1 with the firmware, but Debian 12 would not see the mirrored device, only the individual disks. So this may be a limitation of the Linux kernel itself, and if that is the case there is nothing FOG can do. I say that because the image that clones the hard drives is a custom version of Linux, so if Linux doesn't support these RAID drives then we are kind of stuck.

I'm searching to see if I can find a laptop that has 2 internal NVMe drives for testing, but no luck as of now.

-

@rdfeij said in Problem Capturing right Host Primary Disk with INTEL VROC RAID1:

Intel Corporation Volume Management Device NVMe RAID Controller Intel Corporation [8086:a77f]

FWIW, 8086:a77f is supported by the Linux kernel, so if we assemble the md device it might work, but that is only a guess. It used to be that if the computer was in UEFI mode, plus Linux, plus RAID-on mode, the drives couldn't be seen at all. At least we can see the drives now.

-

@george1421 Still tinkering:

As described in chapter 4 of the Intel VROC Linux user guide, we need a RAID container (in my case 2 NVMe drives for RAID1) and then create a volume within the container:
https://www.intel.com/content/dam/support/us/en/documents/memory-and-storage/linux-intel-vroc-userguide-333915.pdf

But how can I test this? Debug mode doesn't let me boot into FOG after tinkering.
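For reference, the container-then-volume sequence maps onto two `mdadm --create` calls. The sketch below only prints them, on purpose: `--create` writes new RAID metadata, and on a box where the array already exists in the BIOS that would likely destroy it. The helper name and the `/dev/md/imsm0` and `/dev/md/vol0` device names are hypothetical.

```shell
# Print (do NOT execute) the mdadm commands for an Intel VROC/IMSM RAID1:
# 1) a container holding the member disks, 2) a RAID1 volume inside it.
vroc_raid1_create_cmds() {
    container=$1   # e.g. /dev/md/imsm0 (hypothetical name)
    volume=$2      # e.g. /dev/md/vol0 (hypothetical name)
    shift 2        # the remaining arguments are the member disks
    echo "mdadm --create $container --metadata=imsm --raid-devices=$# $*"
    echo "mdadm --create $volume --level=1 --raid-devices=$# $container"
}
```

To look at the metadata the BIOS already wrote without changing anything, `mdadm --examine` on a member disk (for example `mdadm --examine /dev/nvme0n1`) is the safer starting point.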

-

@george1421 said in Problem Capturing right Host Primary Disk with INTEL VROC RAID1:

@rdfeij Well the issue we have is that non of the developers have access to one of these new computers so its hard to solve.

Also I have a project for a customer where we were loading debian on a Dell rack mounted precision workstation. We created raid 1 with the firmware but debian 12 would not see the mirrored device only the individual disks. So this may be a limitation with the linux kernel itself. If that is the case there is nothing FOG can do. Why I say that is the image that clones the hard drives is a custom version of linux. So if linux doesn’t support these raid drives then we are kind of stuck.

I’m searching to see if I can find a laptop that has 2 internal nvme drives for testing, but no luck as of now.

I can give you SSH access if you want; my test box is online. But I've also got further:

I can't post, since I get a "spam is detected" notice when submitting…

-

@george1421

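I have it working. I created a postinit script with:

```
# Assemble IMSM (VROC) arrays, skipping mdadm's platform check
IMSM_NO_PLATFORM=1 mdadm --verbose --assemble --scan
# FOS still targets /dev/md0, so point it at the assembled volume
rm /dev/md0
ln -s /dev/md126 /dev/md0
```

Although it recognizes md126, it still tries to do everything to md0; that's why the symlink is in.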

Thanks to @Ceregon: https://forums.fogproject.org/post/154181

Tested and working with SSD RAID1, NVMe RAID1, and resizable and non-resizable imaging.
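A slightly more defensive take on the same postinit idea: it only touches /dev/md0 when /dev/md126 actually exists, so a machine without the array keeps its normal device. It assumes, like the script in the post, that the assembled volume shows up as /dev/md126; the function names are hypothetical.

```shell
# Assemble IMSM/VROC arrays. IMSM_NO_PLATFORM=1 makes mdadm skip its check
# for Intel RAID platform support, as in the postinit script in the post.
assemble_vroc() {
    IMSM_NO_PLATFORM=1 mdadm --verbose --assemble --scan
}

# Point DST (default /dev/md0) at SRC (default /dev/md126), but only if SRC
# exists; returns 1 and changes nothing otherwise.
link_md_device() {
    src=${1:-/dev/md126}
    dst=${2:-/dev/md0}
    [ -e "$src" ] || return 1
    rm -f "$dst"
    ln -s "$src" "$dst"
}
```

In the postinit script this would be called as `assemble_vroc; link_md_device`.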

It would be nice if there were a way to select postinit scripts per host (group).

That way there would be no need for difficult extra scripting to detect whether the correct hardware is in the system.

-

@rdfeij OK, good, you found a solution. I did find a Dell Precision 3560 laptop that has dual NVMe drives. I was just about to begin testing when I saw your post.

Here are a few comments based on your previous post.

-

When in debug mode (either capture or deploy), you can single-step through the imaging process by calling the master imaging script, called `fog`. At the debug command prompt just key in `fog` and the capture/deploy process will run in single-step mode. You will need to press Enter at each breakpoint, but you can complete the entire imaging process this way.

-

The postinit script is the proper location to add the RAID assembly. You have full access to the FOG variables in the postinit script, so it's possible to use one of the other tag fields to signal when it should assemble the array. It may also be possible to use other key hardware characteristics to identify this system, such as whether specific hardware exists or a specific SMBIOS value exists.
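As an example of the hardware-characteristic approach, the postinit script could look for the VMD NVMe RAID controller (8086:a77f) from earlier in this thread by reading the `vendor` and `device` files that sysfs exposes for each PCI device. This is a sketch; `has_pci_device` is a hypothetical helper, and the third argument only exists so it can be pointed at a test directory.

```shell
# Succeed if a PCI device with the given vendor/device ID is present.
# Reads the "vendor" and "device" files sysfs exposes for each PCI device.
has_pci_device() {
    want_vendor=$1                  # e.g. 0x8086 (Intel)
    want_device=$2                  # e.g. 0xa77f (VMD NVMe RAID controller)
    root=${3:-/sys/bus/pci/devices}
    for dev in "$root"/*; do
        [ -r "$dev/vendor" ] && [ -r "$dev/device" ] || continue
        if [ "$(cat "$dev/vendor")" = "$want_vendor" ] &&
           [ "$(cat "$dev/device")" = "$want_device" ]; then
            return 0
        fi
    done
    return 1
}
```

Usage: `if has_pci_device 0x8086 0xa77f; then IMSM_NO_PLATFORM=1 mdadm --verbose --assemble --scan; fi`. An SMBIOS-based check could use `dmidecode -s baseboard-product-name` instead, assuming dmidecode is available in FOS.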

I wrote a tutorial a long time ago that talked about imaging using the intel rst adapter: https://forums.fogproject.org/topic/7882/capture-deploy-to-target-computers-using-intel-rapid-storage-onboard-raid

-


@rdfeij Well, I have put a whole evening into trying to rebuild the FOG inits (virtual hard drive)…

On my test system I cannot get FOS to see the RAID array completely. When I tried to manually create the array, it said the disks are already part of an array. Then I went down the rabbit hole, so to speak. The version of mdadm in FOS Linux is 4.2. The version that Intel deploys with their prebuilt kernel drivers for Red Hat is 4.3. mdadm 4.2 is from 2021; 4.3 is from 2024. My thinking is that there must be updated code in mdadm to see the new VROC kit.

Technical stuff you don't care about, but I'm documenting it here:

buildroot 2024.02.1 has the mdadm 4.2 package.

buildroot 2024.05.1 has the mdadm 4.3 package (I copied this package to 2024.02.1 and it built OK).

But now I have an issue with partclone: it's failing to compile on an unsafe path in an include for ncurses. I see what the developer of partclone did, but buildroot 2024.02.1 is not building the needed files…

I'm not even sure if this is the right path. I'll try to patch the current init if I can't create the inits with buildroot 2024.05.1.
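If the mdadm version does turn out to matter, a guard like this could make a custom init or postinit refuse to continue with an older mdadm. It's a sketch that assumes `mdadm --version` prints the usual `mdadm - vX.Y - date` line (on stderr, hence the `2>&1`); `mdadm_at_least` is a hypothetical name, and 4.3 as the threshold only reflects the working theory in this post.

```shell
# Succeed if mdadm reports at least version MAJOR.MINOR.
# $3 may supply the version line; otherwise "mdadm --version" is run.
mdadm_at_least() {
    want_major=$1
    want_minor=$2
    version_line=${3:-$(mdadm --version 2>&1)}
    # Pull "X Y" out of a line like "mdadm - vX.Y - <date>"
    ver=$(printf '%s\n' "$version_line" |
        sed -n 's/.* v\([0-9][0-9]*\)\.\([0-9][0-9]*\).*/\1 \2/p')
    [ -n "$ver" ] || return 1
    set -- $ver   # intentional word splitting: $1=major, $2=minor
    [ "$1" -gt "$want_major" ] ||
        { [ "$1" -eq "$want_major" ] && [ "$2" -ge "$want_minor" ]; }
}
```

Usage: `mdadm_at_least 4 3 || echo "mdadm older than 4.3"`.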